Pattern Recognition

Our pattern recognition systems offer precise, high-speed detection, helping to keep your output at its optimum.

Machine Vision Applications

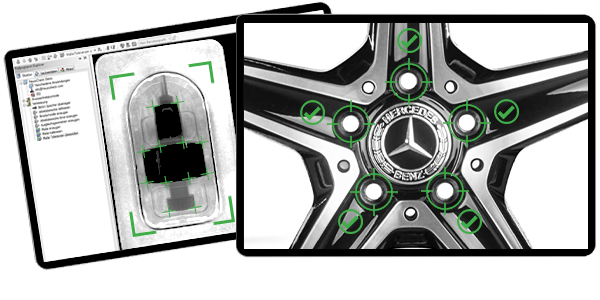

IVS® vision systems can recognise arbitrary patterns, shapes, features and logos, and enable the comparison of trained features and patterns within fractions of a second.

Quality Assurance Made-to-Measure

Typical Vision System Pattern Recognition Applications

- Verify the orientation and position of a part or feature

- Classify complex features

- Robot guidance: to locate, pick and orientate parts

- High speed location of rotated, scaled, and translated objects

- Presence verification of circuit board components

- Identification and verification of product or component types

- Location of reference points and fiducials

- Verifying the presence of products in packages

- Ensuring the correct screw head is used in an automotive assembly

Latest Generation Advanced Algorithms

Pattern Recognition Vision Systems

Machine vision pattern recognition is used to find parts precisely, verify assemblies and command robots accurately for efficient process control.

Simple and reliable systems designed by experts

Explore some of the key features and benefits of using an IVS system for pattern recognition

Locate The Most Complex Parts

Powerful algorithms ensure even the most complex of parts are found quickly and accurately.

Evaluation of Multiple Features

Results can be given in fractions of a second for class, quality, location and size.

Robot Guidance

We give robots eyes; the latest generation of 2D & 3D tools can enable your robots to find, pick and place with high precision.

Powerful and fast algorithms

Our vision systems are used to find objects and easily identify models that have been translated, rotated and scaled, with sub-pixel accuracy. IVS® neural network tools can learn to recognise arbitrary patterns, shapes and logos.

High-speed contour matching, supported by a powerful pattern training wizard, enables accurate and repeatable system setup. By checking patterns on packs, parts, sub-assemblies and components, it helps to verify products and identify faults.

Our vision systems can also accurately determine the position of parts to direct robots, find fiducials, align products and provide positional adjustment feedback. Precise alignment with 360-degree rotational and pivotal offset provides reliable process control.

Real-time calibration provides high-performance measuring that is easy and quick to set up, while edge detection and high-definition contour analysis provide exact matching and measuring capability. Automatic data recording, image saving and inspection reporting are included with all IVS® vision systems, providing brand and warranty protection against customer complaints or costly recalls. All data can be saved to factory information systems, SQL databases or directly on the vision system.

IVS® provides sub-pixel precision vision systems for reliable measurement and dimensionally accurate inspection. From checking simple geometrical tolerances to micron-level detection, customers can be confident that our gauging and measuring vision solutions provide robust and repeatable results. Powerful gauging wizards are incorporated into our vision systems, enabling customers to make sophisticated measurements to sub-pixel accuracy at very high speeds.

2D, 3D, Classifiers Or AI Recognition

The Technology Behind Pattern Recognition

There are a variety of ways an IVS vision system can recognise and classify patterns. These include:

- Template Matching

- Contour Matching

- Classify Regions of Interest

- AI deep learning algorithms

- 3D matching

Template matching: a master “template” is taught for the part or component that needs to be verified. Each subsequent image is then compared to the master to check that they match. The IVS® system can also use geometric features to find an object via contour-based verification. It can rapidly and reliably locate several models that have been translated, rotated and scaled, with sub-pixel accuracy. The system can still locate a partially missing object and continues to perform when the scene is subject to uneven illumination, which minimises lighting requirements.

Contour matching: as with template matching, a master “model” is taught. Compared with template matching, contour matching has a greater tolerance to variations in lighting, including reflections, and can find partially overlapped objects. The position, dimension and orientation it detects are also more precise.
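As a rough illustration of comparing each image to a taught master, the sketch below slides a small template over a grey-level image and scores every position with normalised cross-correlation. This is a generic textbook technique, not the IVS® implementation; the function names and the nested-list image format are assumptions made for the example, and the sketch handles translation only, not rotation or scale.

```python
from math import sqrt

def ncc(patch, template):
    """Normalised cross-correlation of two equally sized grey-level grids."""
    a = [v for row in patch for v in row]
    b = [v for row in template for v in row]
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    num = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    den = sqrt(sum((x - mean_a) ** 2 for x in a) *
               sum((y - mean_b) ** 2 for y in b))
    # A completely flat patch has no pattern to correlate with.
    return num / den if den else 0.0

def find_template(image, template):
    """Return (row, col, score) of the best-scoring template position."""
    th, tw = len(template), len(template[0])
    best = (0, 0, -1.0)
    for r in range(len(image) - th + 1):
        for c in range(len(image[0]) - tw + 1):
            patch = [row[c:c + tw] for row in image[r:r + th]]
            score = ncc(patch, template)
            if score > best[2]:
                best = (r, c, score)
    return best

# Hypothetical master template: a small cross.
template = [[0, 9, 0],
            [9, 9, 9],
            [0, 9, 0]]

# Test image: the same cross, brighter, on a uniform background.
image = [[1, 1,  1,  1,  1, 1],
         [1, 1,  1, 19,  1, 1],
         [1, 1, 19, 19, 19, 1],
         [1, 1,  1, 19,  1, 1],
         [1, 1,  1,  1,  1, 1]]

row, col, score = find_template(image, template)
print(row, col, round(score * 100, 1))  # position and score as a percentage
```

Because the score is normalised, a uniform change in brightness or contrast between the master and the live image does not lower the match score, which is one reason correlation-based matching copes with some lighting variation.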

Classify Regions of Interest: this function sorts regions of interest into classes based on their characteristics using a classifier, such as a neural network. The recognition of digits or letters is a common application.
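The idea of sorting regions into classes by their characteristics can be sketched with a very simple classifier. The example below uses a nearest-centroid classifier as a stand-in for the neural network mentioned above; the two features (aspect ratio and fill ratio), the sample values and the function names are all hypothetical.

```python
from math import dist

def train_centroids(samples):
    """samples maps class name -> list of feature vectors; returns mean vectors."""
    centroids = {}
    for name, vectors in samples.items():
        n = len(vectors)
        width = len(vectors[0])
        centroids[name] = tuple(sum(v[i] for v in vectors) / n
                                for i in range(width))
    return centroids

def classify(features, centroids):
    """Assign the region to the class whose centroid is closest."""
    return min(centroids, key=lambda name: dist(features, centroids[name]))

# Hypothetical training data: (aspect ratio, fill ratio) per region of interest.
samples = {
    "1": [(0.30, 0.85), (0.35, 0.90)],   # tall, mostly filled
    "0": [(0.60, 0.50), (0.65, 0.55)],   # wider, hollow
}
centroids = train_centroids(samples)

print(classify((0.32, 0.88), centroids))
print(classify((0.62, 0.52), centroids))
```

A production classifier would use far more features and training samples; the point here is only the flow of training on labelled regions and then assigning each new region to a class.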

AI deep learning: in the context of industrial machine vision, AI deep learning teaches robots and machines to accomplish what people do naturally, namely, learn by example. The latest IVS® machine vision solutions replicate human brain activity when learning a task, allowing vision systems to recognise images, locate objects, sense trends, and interpret subtle changes.

3D matching: suited to more complex objects, a master “3D model” is taught. This can be a 3D CAD model or one created from a gold sample production part. After that, each 3D scan is compared to the master to check that the 3D points match. Several models that have been translated and rotated can all be found in one scene. The system will compute each model’s pose and quality.
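One simple way to picture the comparison of a 3D scan against a master is a point-by-point distance check. The sketch below assumes the scan has already been aligned to the master (the pose search is not shown) and reports the fraction of master points that have a scan point within a tolerance. The function name, tolerance and point values are illustrative assumptions, not the IVS® quality measure.

```python
from math import dist

def match_quality(scan, master, tolerance):
    """Fraction of master points with at least one scan point within tolerance."""
    matched = sum(
        1 for m in master
        if min(dist(m, s) for s in scan) <= tolerance
    )
    return matched / len(master)

# Hypothetical master model (mm) and an aligned scan with one point out of place.
master = [(0, 0, 0), (10, 0, 0), (0, 10, 0), (0, 0, 5)]
scan = [(0.02, 0, 0), (10, 0.01, 0), (0, 10, 0.03), (0, 0, 6)]

quality = match_quality(scan, master, tolerance=0.1)
print(f"{quality:.0%} of master points matched")
```

This brute-force search compares every master point with every scan point, so real point clouds would need a spatial index; the sketch only shows what a quality figure can mean.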

Robot Guidance

Machine Vision Pattern Recognition

Machine vision pattern recognition can be used to guide robots to locate, pick and orientate parts. The IVS vision software suite tolerates variations in scale, rotation, noise, occlusion, contrast, lighting and other parameters, so the part is located reliably. Objects are localised with sub-pixel accuracy, even when rotated or resized.

A pattern recognition system can locate and classify touching and slightly overlapping parts, enabling the computation of coordinate and rotational information for robot guidance.
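To show how coordinate and rotational information might be passed to a robot, the sketch below converts a match position in pixels into robot coordinates in millimetres. It assumes a flat 2D scene, no lens distortion, and a calibration made up of a scale, a camera rotation and an origin offset; the dictionary keys and values are hypothetical.

```python
from math import cos, sin, radians

def pixel_to_robot(px, py, angle_deg, calib):
    """Map an image match (pixels, degrees) to robot coordinates (mm, degrees)."""
    t = radians(calib["camera_rotation_deg"])
    s = calib["mm_per_pixel"]
    # Rotate into the robot frame, scale to millimetres, then add the origin.
    x = calib["origin_x_mm"] + s * (px * cos(t) - py * sin(t))
    y = calib["origin_y_mm"] + s * (px * sin(t) + py * cos(t))
    angle = (angle_deg + calib["camera_rotation_deg"]) % 360
    return x, y, angle

# Hypothetical calibration values.
calib = {
    "mm_per_pixel": 0.5,
    "camera_rotation_deg": 0.0,
    "origin_x_mm": 100.0,
    "origin_y_mm": 50.0,
}

x, y, angle = pixel_to_robot(200, 100, 30, calib)
print(x, y, angle)
```

In practice the calibration would come from a calibration routine rather than hand-entered numbers, and a 3D application would need a full pose rather than a planar transform.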

Pattern recognition can also be used to align parts for other machine vision systems. Because parts may be presented to the camera in unpredictable orientations during production, machine vision can locate the part and then align it for the other systems, ensuring a correctly orientated image is captured.

Pattern recognition can be used to precisely position other vision software tools so that they interact with the part correctly, eliminating the need for hard tooling to keep parts in situ during an inspection.
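Positioning other tools relative to a located part can be pictured as moving a region of interest with the part. The sketch below takes the corners of a region taught at one part pose and places them at the pose found in a new image. Poses are given as (x, y, angle in degrees); scale changes are ignored, and all names and values are assumptions for illustration.

```python
from math import cos, sin, radians

def place_roi(roi_corners, trained_pose, found_pose):
    """Move ROI corners taught at trained_pose so they follow the part."""
    tx, ty, ta = trained_pose
    fx, fy, fa = found_pose
    t = radians(fa - ta)  # how far the part has rotated since teaching
    placed = []
    for x, y in roi_corners:
        dx, dy = x - tx, y - ty  # corner relative to the trained part position
        placed.append((fx + dx * cos(t) - dy * sin(t),
                       fy + dx * sin(t) + dy * cos(t)))
    return placed

# Hypothetical ROI edge taught 10 to 20 pixels to the right of the part origin.
roi = [(110, 100), (120, 100)]
moved = place_roi(roi, trained_pose=(100, 100, 0), found_pose=(200, 150, 90))
print([(round(x, 3), round(y, 3)) for x, y in moved])
```

Because the region follows the part in software, the part does not need to be held in a fixed position for the downstream tool to inspect the right area.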

More about pattern recognition in machine vision

Part Detection

Detecting a part or feature within the camera’s field of view is one of the most widely used vision system tools. Pattern recognition algorithms can provide pass/fail decisions for class, quality, location, size, etc., and can be used where robots need to be guided to pick and place products. There are many approaches to pattern recognition, each with its advantages and disadvantages.

The IVS software suite includes intuitive vision tools so users can easily refine, reteach and create models. Within one image, a pass/fail decision can be given for multiple patterns.

Deep learning AI pattern recognition vision systems can give a human-like decision for defect identification, texture and material classification, assembly verification and defect localisation, character reading, etc.

3D matching allows us to accurately compare a captured scene to a trained model using point cloud verification. Deformable surface-based matching can match products that have an acceptable level of deformation, e.g. flexible products. CAD data can be used to aid the matching process and speed up development time.

The assignment of an object to one of several groups based on specified features is known as classification. For example, classification can be used to check that a sequence of characters is in the correct order, e.g. abcd. The same approach can be used to verify a medical device assembly, e.g. cap, spring, needle.
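Once each item has been classified, checking the order is a straightforward comparison. The sketch below assumes a classifier has already produced a list of class labels in the order they were detected; the function name and return format are illustrative.

```python
def verify_sequence(detected, expected):
    """Return (passed, index of first mismatch); the index is None on a pass."""
    for i, (got, want) in enumerate(zip(detected, expected)):
        if got != want:
            return False, i
    if len(detected) != len(expected):
        # One list ran out early: an item is missing or an extra one is present.
        return False, min(len(detected), len(expected))
    return True, None

expected = ["cap", "spring", "needle"]

print(verify_sequence(["cap", "spring", "needle"], expected))
print(verify_sequence(["cap", "needle", "spring"], expected))
print(verify_sequence(["cap", "spring"], expected))
```

Reporting the position of the first mismatch, rather than only pass or fail, makes it easier to see which component is wrong or missing.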

How to set up machine vision pattern recognition

- The template or model is created

- This template or model is saved as a file, allowing several models to be created and easily copied for backup purposes

- The model is then used to locate an object, or verify a pass/fail result
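The three steps above can be sketched as a create, save and use cycle. The example below stores a model as a JSON file, copies it as a backup, reloads it and applies a pass/fail threshold. The JSON layout, field names and threshold are assumptions for illustration; the actual IVS model file format is not described here.

```python
import json
import shutil
import tempfile
from pathlib import Path

def save_model(model, path):
    """Step 2: store the trained model as a file."""
    Path(path).write_text(json.dumps(model, indent=2))

def backup_model(path):
    """Copy the model file alongside the original for backup purposes."""
    backup = Path(f"{path}.bak")
    shutil.copyfile(path, backup)
    return backup

def load_model(path):
    return json.loads(Path(path).read_text())

def verify(model, score):
    """Step 3: pass/fail decision from a match score in percent."""
    return score >= model["min_score"]

# Step 1: create the model (placeholder contents).
model = {
    "name": "screw_head",
    "min_score": 80.0,
    "template": [[0, 9, 0], [9, 9, 9], [0, 9, 0]],
}

with tempfile.TemporaryDirectory() as folder:
    path = Path(folder) / "screw_head.json"
    save_model(model, path)
    backup = backup_model(path)
    restored = load_model(backup)

print(restored == model)
print(verify(restored, 92.5))
```

Keeping each model in its own file means several models can sit side by side and be copied or restored with ordinary file tools.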

What values can be computed from pattern recognition?

- The matching score as a percentage, 100% being a perfect match

- The position of the match in the image; X, Y and Z coordinates

- Orientation

- Scaling factor, i.e. how large the match is compared to the model

- Class, e.g. cap or button head screw
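The values listed above could be carried in a single result record and checked against limits. The sketch below is one possible layout; the field names, default thresholds and failure labels are hypothetical and do not reflect the IVS software's actual output format.

```python
from dataclasses import dataclass

@dataclass
class MatchResult:
    score: float      # matching score in percent; 100 is a perfect match
    x: float          # position of the match
    y: float
    z: float
    angle_deg: float  # orientation
    scale: float      # size of the match relative to the model; 1.0 = same size
    label: str        # class, e.g. "cap" or "button head screw"

def evaluate(result, expected_label, min_score=80.0, scale_limits=(0.9, 1.1)):
    """Return a list of failure reasons; an empty list means a pass."""
    failures = []
    if result.score < min_score:
        failures.append("score")
    if not scale_limits[0] <= result.scale <= scale_limits[1]:
        failures.append("scale")
    if result.label != expected_label:
        failures.append("class")
    return failures

good = MatchResult(96.4, 212.5, 88.0, 0.0, 14.2, 1.02, "cap")
bad = MatchResult(71.0, 210.0, 90.0, 0.0, 180.0, 1.30, "button head screw")

print(evaluate(good, "cap"))
print(evaluate(bad, "cap"))
```

Returning the reasons for a failure, rather than a single flag, lets the same record feed both a pass/fail output and an inspection report.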

The benefits of pattern recognition

- High precision level

- Intuitive machine vision software makes it simple to configure, train and evaluate parts

- Products can be located even when partially obscured by other products/items in the scene

- Parts can be found even when there are constraints with lighting and reflections on the production line