In this month’s blog, we look at the highly regulated field of medical device manufacturing, where precision and compliance are not just desirable but mandatory. As the medical devices industry has grown, the devices being manufactured have become substantially more complex. That complexity demands high-end technology to ensure each product is of the right quality for human use, so what’s the key to producing safer, smarter medical devices? Machine vision is one of the most important technologies for achieving precision and compliance with the applicable regulations. In this article, we look at the role machine vision plays in medical device manufacturing and how it supports both precision and regulatory compliance.

How Machine Vision Works in Manufacturing

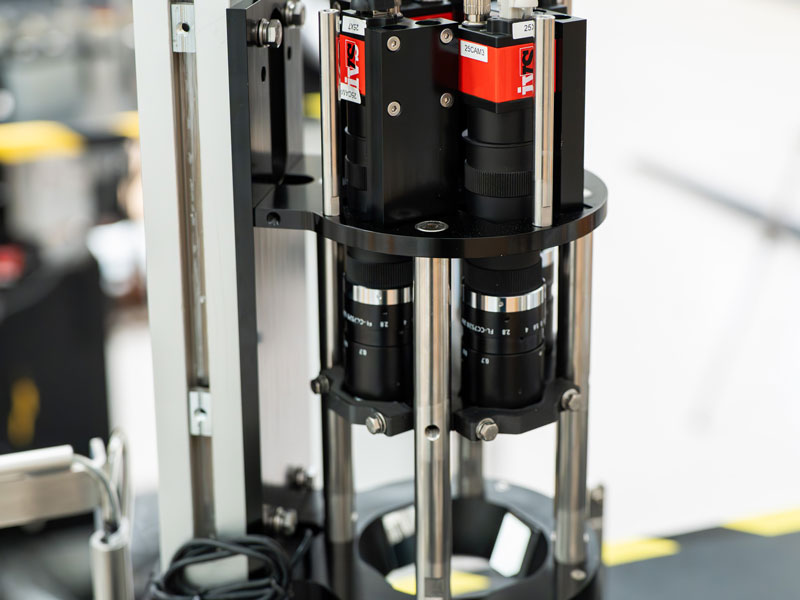

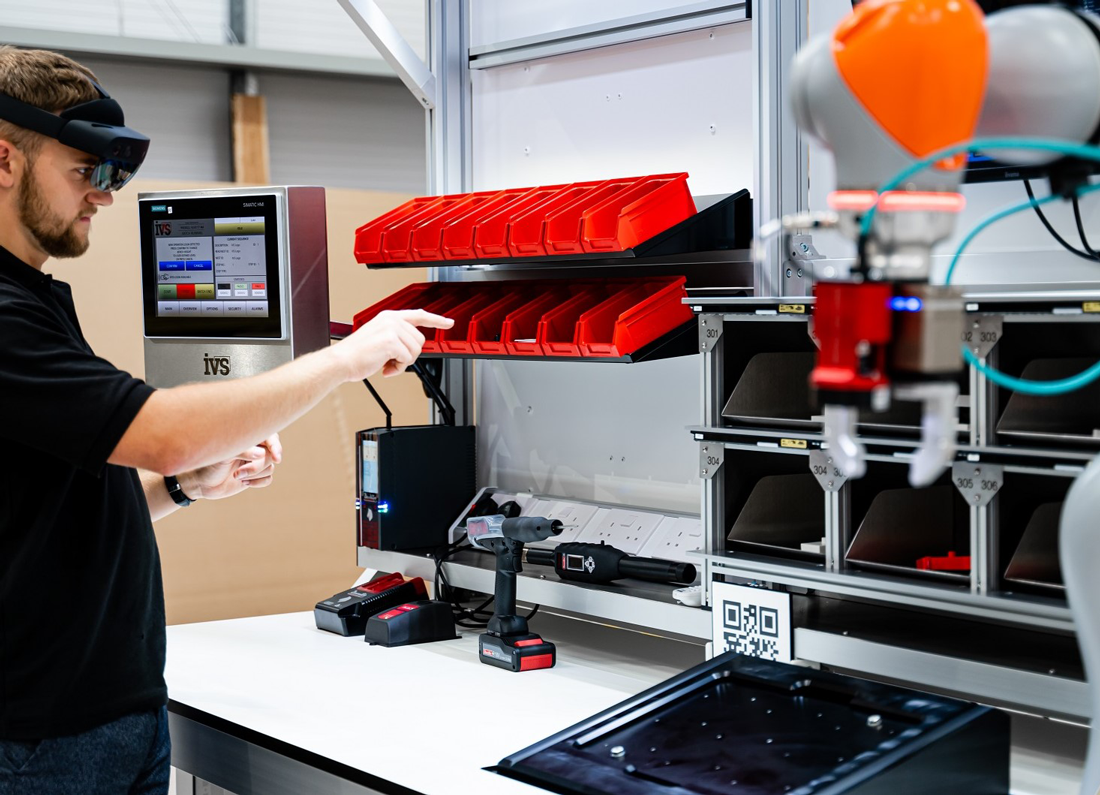

Machine vision (often referred to in a factory context as industrial vision or vision systems) uses imaging hardware and software to perform visual inspections on the manufacturing line, in applications where quality control inspection is required at speed. Using cameras, sensors, and complex algorithms, it gives machines a sense of sight: images are captured by one or more cameras and then processed so that decisions can be made from the visual data.
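The capture, process and decide loop described above can be sketched in a few lines of Python. This is a minimal illustration only, not a production algorithm: the image is a hypothetical grid of grayscale values rather than a real camera frame, and the function names, threshold and pixel limit are all assumptions chosen for the example.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel values, 0 (black) to 255 (white)


def count_dark_pixels(image: Image, threshold: int) -> int:
    """Process step: count pixels darker than the threshold (candidate defects)."""
    return sum(1 for row in image for pixel in row if pixel < threshold)


def inspect(image: Image, threshold: int = 100, max_dark_pixels: int = 2) -> str:
    """Decide step: pass the part only if few enough dark pixels were found."""
    dark = count_dark_pixels(image, threshold)
    return "PASS" if dark <= max_dark_pixels else "FAIL"


# Capture step stand-in: hypothetical 4x4 images of a bright part.
clean_part = [[200] * 4 for _ in range(4)]
scratched_part = [[200] * 4, [30, 40, 35, 200], [200] * 4, [200] * 4]

print(inspect(clean_part))      # PASS
print(inspect(scratched_part))  # FAIL
```

A real system would replace the hard-coded grids with frames from a camera and the simple threshold with more robust image processing, but the overall flow stays the same.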

In medical device manufacturing, machine vision systems play a vital role in ensuring the highest levels of precision and quality. Examples include systems that can detect the smallest imperfections, measure components with sub-micron precision, and confirm that every product is produced to the tightest of tolerances, all automatically.

The Significance of Medical Device Manufacturing Accuracy

Surgical instruments, injectable insulin pens, contact lenses, asthma devices and medical syringes all need high-precision manufacturing, for several reasons. These devices directly affect patient health and safety, and they must also be produced in line with legal requirements and to validated specifications. Even tiny damage or a slight deviation from those specifications can cause a product to fail and so put human life at risk. The drive towards miniaturisation means that parts have to be manufactured more precisely than ever before. As devices get smaller and more complex, the margin for error shrinks, making precision even more critical.

Machine vision systems can maintain precision at these levels because of the high resolution at which they operate. They can use advanced imaging techniques, such as 3D vision, to measure dimensions, check alignments, and verify that components are manufactured within tolerance. They perform a quality-assurance check on every unit as it comes off the assembly line, preventing defects and recalls further down the line.
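As a rough illustration of what "within tolerance" means in practice, the sketch below converts a length measured in pixels to millimetres and compares it with a nominal dimension. The calibration factor, nominal value and tolerance are hypothetical numbers chosen for the example, not figures from any real device.

```python
def pixels_to_mm(length_px: float, mm_per_pixel: float) -> float:
    """Convert a pixel measurement to millimetres using a calibration factor."""
    return length_px * mm_per_pixel


def within_tolerance(measured_mm: float, nominal_mm: float, tolerance_mm: float) -> bool:
    """Return True if the measurement lies within nominal +/- tolerance."""
    return abs(measured_mm - nominal_mm) <= tolerance_mm


# Assumed values: 0.005 mm per pixel, nominal 4.000 mm, tolerance +/- 0.010 mm.
MM_PER_PIXEL = 0.005
NOMINAL_MM = 4.000
TOLERANCE_MM = 0.010

good_part = pixels_to_mm(801, MM_PER_PIXEL)  # about 4.005 mm
bad_part = pixels_to_mm(804, MM_PER_PIXEL)   # about 4.020 mm

print(within_tolerance(good_part, NOMINAL_MM, TOLERANCE_MM))  # True
print(within_tolerance(bad_part, NOMINAL_MM, TOLERANCE_MM))   # False
```

In a real installation the calibration factor would come from a calibration target imaged under the same optics, and the measurement uncertainty of the system would be taken into account when setting acceptance limits.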

Meeting Compliance Regulations

The medical device industry is heavily regulated by bodies such as the FDA (Food and Drug Administration) in the United States and, in the European Union, the national competent authorities and notified bodies that apply the Medical Device Regulation (MDR), alongside other national and international bodies that set relevant standards. Regulations govern every aspect of device manufacture, from design and production to testing and distribution.

The Current Good Manufacturing Practice (CGMP) regulations issued by the FDA set out quality requirements that apply throughout the manufacturing process. Machine vision systems can automatically inspect components and finished products against specifications, helping manufacturers demonstrate that they meet the relevant regulations.

Machine vision systems can also produce detailed inspection records with the traceability required to prove compliance with established regulatory standards. This is especially important for audits: when asked, manufacturers must be able to show evidence that their product was manufactured according to the regulations.
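To make the idea of a traceable inspection record more concrete, here is a minimal sketch of what one record might contain. The field names, identifiers and the use of a SHA-256 digest as a simple tamper-evidence check are all assumptions made for illustration; they are not a statement of what any regulation requires.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class InspectionRecord:
    batch_id: str
    unit_serial: str
    check_name: str
    measured_value: float
    passed: bool
    inspected_at: str  # ISO 8601 timestamp in UTC


def make_record(batch_id: str, unit_serial: str, check_name: str,
                measured_value: float, passed: bool) -> InspectionRecord:
    """Create a record stamped with the current UTC time."""
    timestamp = datetime.now(timezone.utc).isoformat()
    return InspectionRecord(batch_id, unit_serial, check_name,
                            measured_value, passed, timestamp)


def record_digest(record: InspectionRecord) -> str:
    """Return a SHA-256 digest of the record so later changes can be detected."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# Hypothetical identifiers and measurement, for illustration only.
record = make_record("BATCH-001", "SN-0001", "needle_length_mm", 12.70, True)
print(json.dumps(asdict(record), sort_keys=True))
print(record_digest(record))
```

Storing the digest alongside the record is one simple way to show later that a result has not been altered; a validated system would typically add access control and audit trails on top.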

Using Machine Vision in Medical Device Production

Machine vision is used at each stage of production to ensure the precision and compliance of the final product. A few of the main uses are:

• Surface Inspection: Vision systems can detect scratches, cracks, and foreign material present on the surface during the manufacturing process, which may lead to a device failure in operation.

• Dimensional Measurement: Vision metrology is used for measuring component dimensions to ensure they meet design specifications.

• Assembly Verification: Machine vision ensures that components are assembled correctly, reducing defect risk from the assembly process.

• Label Verification: Ensuring the correct label goes on each batch is essential for compliance. Machine vision systems can check that labels are properly positioned and that the text printed on them is accurate.

• Packaging Inspection: Proper packaging ensures that devices remain sterile and protected while in transit. Machine vision systems ensure that packaging is not defective and is properly sealed.

• Code Reading: Machine vision systems read and verify Data Matrix codes and barcodes on products and packaging to maintain correct product identification throughout the supply chain.
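As one small example from the uses listed above, part of verifying a decoded barcode is checking that its content is internally consistent. The sketch below assumes the code carries a GS1 GTIN and validates its check digit using the standard GS1 weighting (alternating 3 and 1, starting from the rightmost data digit). The function names are hypothetical, and a real vision system would also grade print quality and compare the code against the expected product.

```python
def gs1_check_digit(data_digits: str) -> int:
    """Compute the GS1 check digit for the digits that precede it."""
    total = 0
    # Weights alternate 3, 1, 3, 1, ... starting from the rightmost data digit.
    for position, char in enumerate(reversed(data_digits)):
        weight = 3 if position % 2 == 0 else 1
        total += int(char) * weight
    return (10 - total % 10) % 10


def is_valid_gtin(code: str) -> bool:
    """Return True if the string is a GTIN-8/12/13/14 with a correct check digit."""
    if not (code.isascii() and code.isdigit()) or len(code) not in (8, 12, 13, 14):
        return False
    return gs1_check_digit(code[:-1]) == int(code[-1])


print(is_valid_gtin("4006381333931"))  # True: check digit matches
print(is_valid_gtin("4006381333932"))  # False: last digit is wrong
```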

Optimising Efficiency and Lowering Costs

The benefits of machine vision go beyond precision and compliance: it can also make manufacturing more efficient and so reduce costs. Automated inspection and measurement can be carried out much faster and more consistently than by a team of human inspectors, which helps increase throughput.

Machine vision also reduces human error, which can be costly in medical device manufacturing. A simple mistake can force a manufacturer to recall defective products, expose it to lawsuits and damage its reputation. Catching defects early helps to minimise these risks and costs, which is why machine vision offers such a significant benefit.

Manufacturers can also use inspection data to analyse trends and uncover underlying issues before they lead to defects, allowing for higher uptime through preventative maintenance of the lines.
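One simple way to spot a trend in inspection data is to compare new measurements against limits derived from a stable baseline. The sketch below uses a basic three-sigma rule on hypothetical measurement values; the function names and data are assumptions for illustration, and a production statistical process control scheme would usually be more elaborate.

```python
from statistics import mean, stdev
from typing import List, Tuple


def control_limits(baseline: List[float]) -> Tuple[float, float]:
    """Return (lower, upper) limits at the baseline mean +/- 3 standard deviations."""
    centre = mean(baseline)
    spread = stdev(baseline)
    return centre - 3 * spread, centre + 3 * spread


def out_of_control(values: List[float], limits: Tuple[float, float]) -> List[int]:
    """Return the indexes of values that fall outside the limits."""
    lower, upper = limits
    return [i for i, value in enumerate(values) if value < lower or value > upper]


# Hypothetical measurements in mm from a stable period of production.
baseline = [4.000, 4.002, 3.998, 4.001, 3.999, 4.000, 4.001, 3.999]
limits = control_limits(baseline)

# Hypothetical new measurements that drift upwards over time.
new_values = [4.000, 4.001, 4.003, 4.006, 4.009]
print(out_of_control(new_values, limits))  # [3, 4]
```

Here the last two measurements fall outside the limits, which could prompt a check of the tooling before parts actually go out of tolerance.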

Future Trends in Medical Device Manufacturing and Machine Vision

As technology advances, the role of machine vision systems in medical device manufacturing will continue to develop. Here are some of the trends we can expect to see over time.

• Integration of Artificial Intelligence (AI) with Machine Vision Systems: AI and machine learning are increasingly incorporated into machine vision systems, further advancing image analysis and pattern recognition. This will enable even greater precision and the possibility of detecting less visible imperfections that conventional vision systems might overlook.

• 3D Vision: Where 2D vision systems have traditionally been used, the third dimension is increasingly being added. 3D vision systems provide a more complete view of components than a single-plane 2D image, offering better measurement and inspection capabilities on complex shapes and assemblies.

• High-Speed Vision Systems: As production lines run faster, demand grows for vision systems that can inspect accurately at those higher speeds.

• Edge Computing: With edge computing, machine vision systems can process data locally, close to the camera, which reduces latency and supports real-time decisions. This is crucial in applications that need instant feedback to adjust the manufacturing process.

Conclusion

Medical device manufacturing can be significantly enhanced by machine vision, which provides superior levels of precision and compliance. By automating inspections and measurements, it also helps manufacturers meet rigorous regulatory requirements. The technology is still advancing, and machine vision is likely to become even more critical to this industry as further improvements are made in efficiency, accuracy, and overall manufacturing quality.