We are about to see a significant crossover between Mixed Reality and automated machine vision. Although they are two separate and distinct application areas in their own right, we have now reached the point where the two disciplines can be combined to aid automation and increase productivity in manufacturing.

What is Mixed Reality?

Mixed Reality combines the physical and digital realms. These two realities sit at opposite ends of a spectrum known as the virtuality continuum, also called the mixed reality spectrum. At one end of the spectrum is the physical reality of our existence as humans; at the other end is digital reality. In layman’s terms, mixed reality is the ability to combine what you see in front of you with a digital map or projection placed in your line of sight, so that a system can display digital objects as if they existed in the real world. You can think of the heads-up displays (HUDs) in fighter jets, which let pilots see a projection in front of them, as the original precursor to today’s mixed reality. The difference is that mixed reality is now carried with the user, providing a real-time, immersive experience. These experiences have been made possible by significant technical advances in recent years.

What is Mixed Reality in production?

Let’s drill down on the hardware. One of the key products in this market is the Microsoft HoloLens 2 headset. It is designed to operate untethered, with no cable connection to a PC or controller. It is a self-contained holographic device, so industrial applications can be loaded directly onto the unit, which makes complex mixed reality industrial operations possible. It has a see-through display that lets the user see the physical environment while wearing the headset, unlike a virtual reality headset, which replaces the user’s view of the real world.

An operator, technician or engineer will typically put on the unit at the start of a shift. It has a display positioned in the wearer’s line of sight, so instructions, graphics, prompts and signs can be projected directly into the engineer’s view, creating a mixed reality industrial environment. The unit also has multiple cameras, including cameras for eye tracking, and can broadcast the video the user sees to a remote location. Together, these features create a mixed and augmented reality experience.

How can Mixed Reality be applied in industry?

Mixed reality can be applied in several different ways. Here are some of the main application areas.

– Task Guidance. Imagine you have a machine on the other side of the world and this machine develops a fault. Your engineers can assist the workers on site by walking them through the issue as if they were standing in front of the machine themselves.

– Remote Inspection. The ability to inspect a remote area for maintenance, damage or repair requirements. This reduces the cost of quality assurance and allows experts to assess issues immediately, from a distance.

– Assembly Assistance. The ability to assist remote workers by walking them through complex tasks using animated prompts and step-by-step guides. Being able to project drawings, schematics and 3D renders of the finished assembly makes mixed reality a practical tool in manufacturing.

– Training. Industrial training courses can be integrated directly into the mixed reality headset. This is especially important when manufacturers rely on temporary contract workers who need to be trained repeatedly.

– Safety. Improved health and safety training and situational awareness. Prompts and holograms relating to workplace safety can be projected to guide workers in factory environments.

Mixed Reality assembly and machine vision

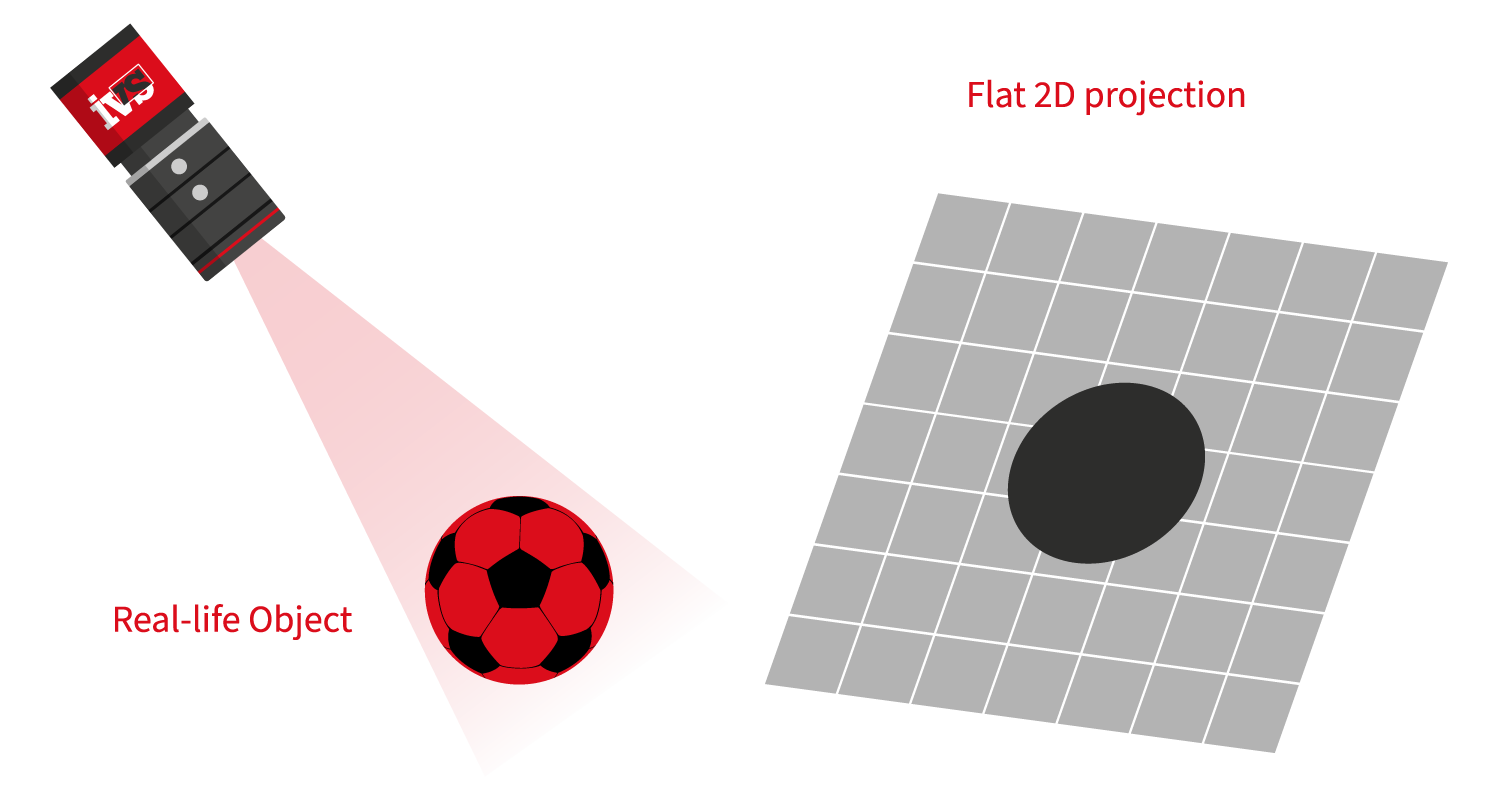

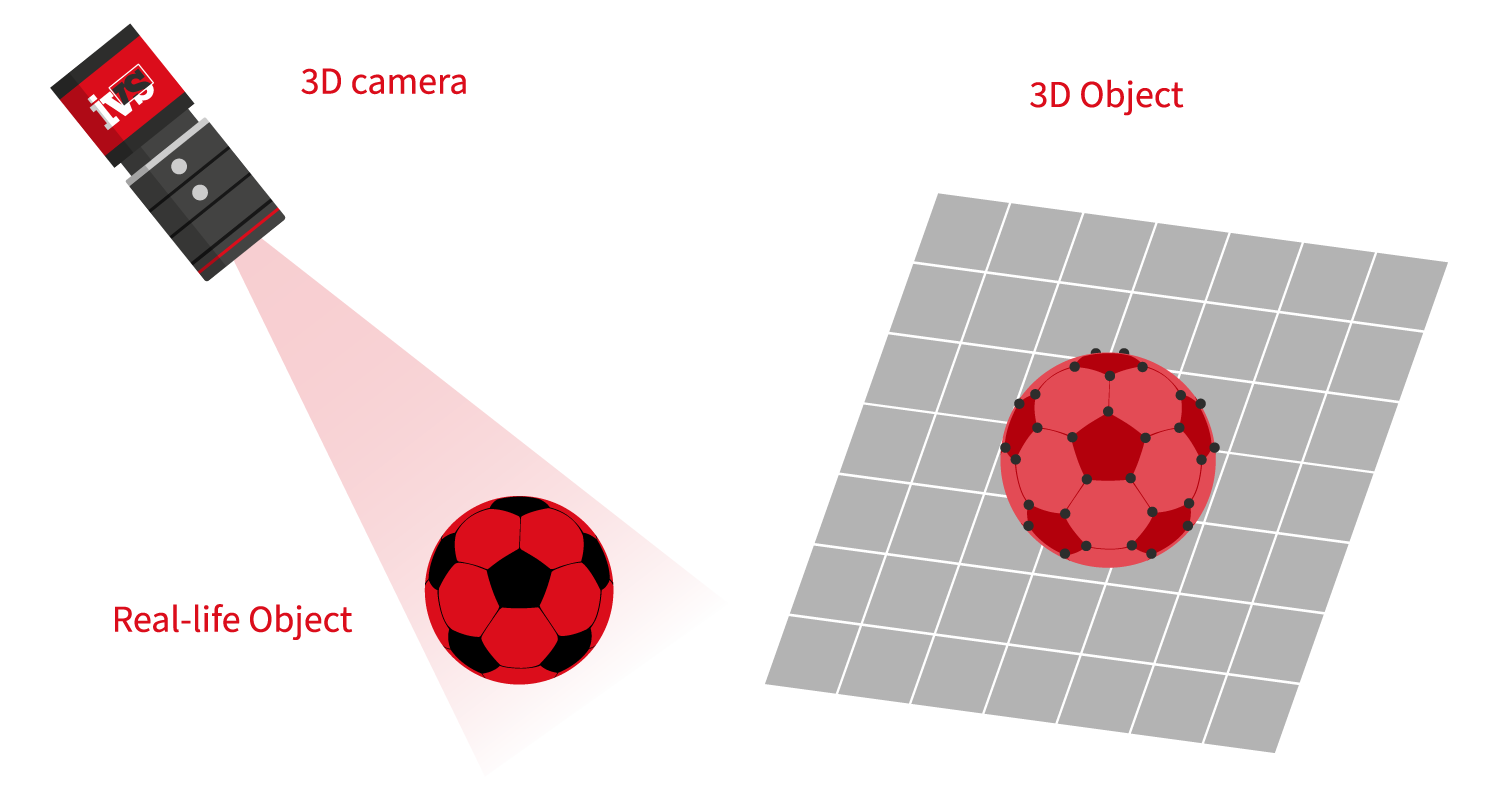

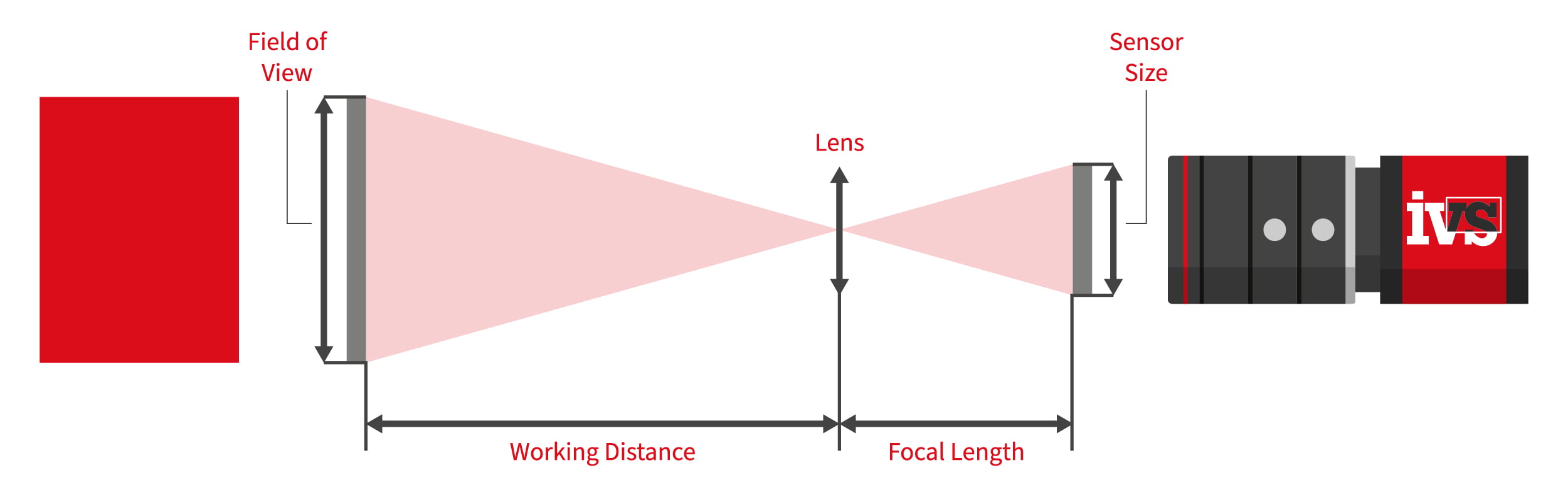

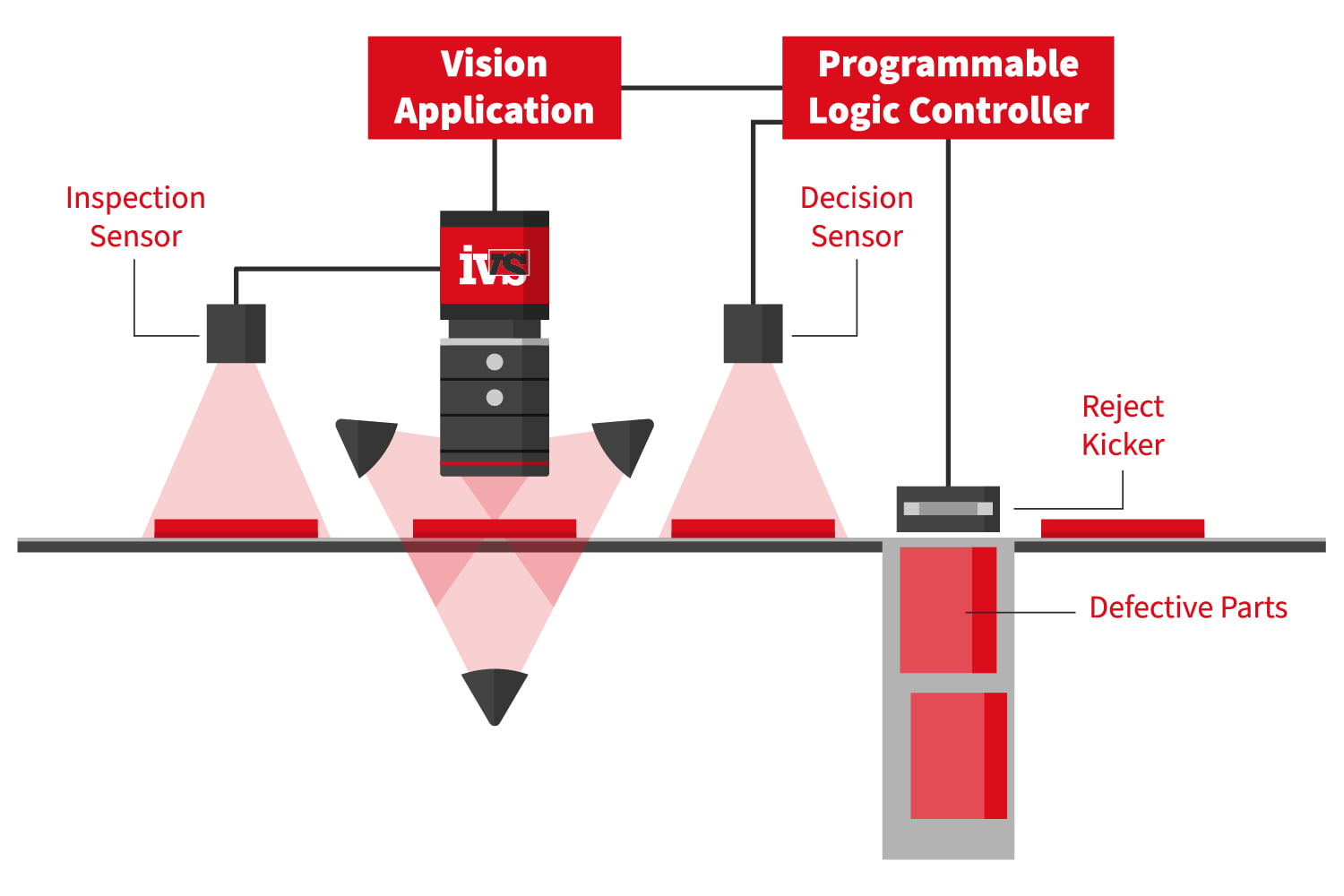

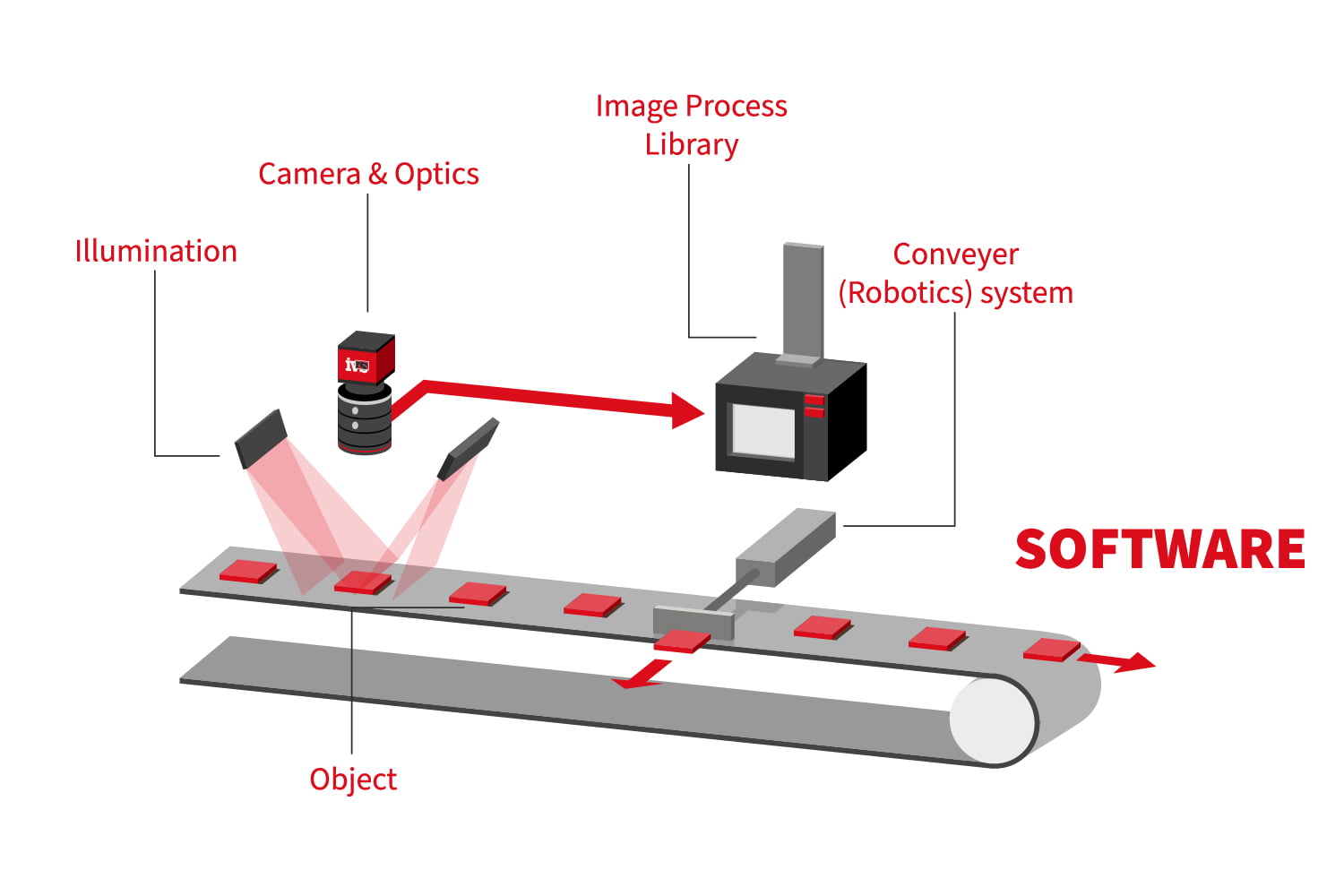

Machine vision in industrial manufacturing is primarily concerned with quality control, i.e. using automated visual inspection to verify that a component, product or sub-assembly is correct. This can involve measurement, checking for the presence of an object, reading a code or verifying print. Combining mixed reality with automated machine vision provides a platform to boost productivity.

Consider an operator or assembly worker sitting at a workbench wearing a device such as the HoloLens. The operator could be assembling a complex unit with many parts. They can see the physical items around them, such as components and assemblies, and at the same time interact with digital content, such as a shared document that syncs to the cloud in real time or animated assembly instructions. That, in essence, is the promise of mixed reality.

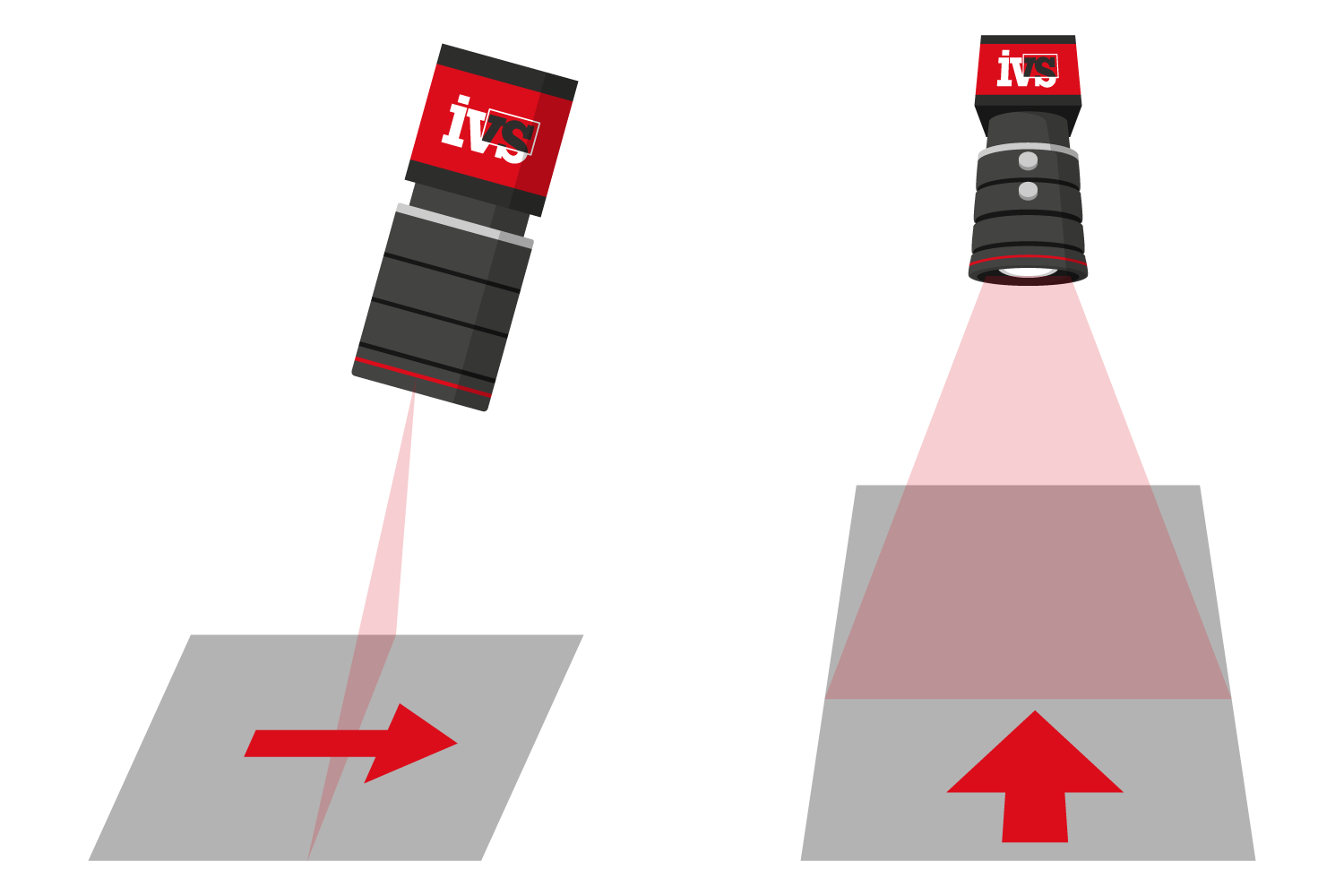

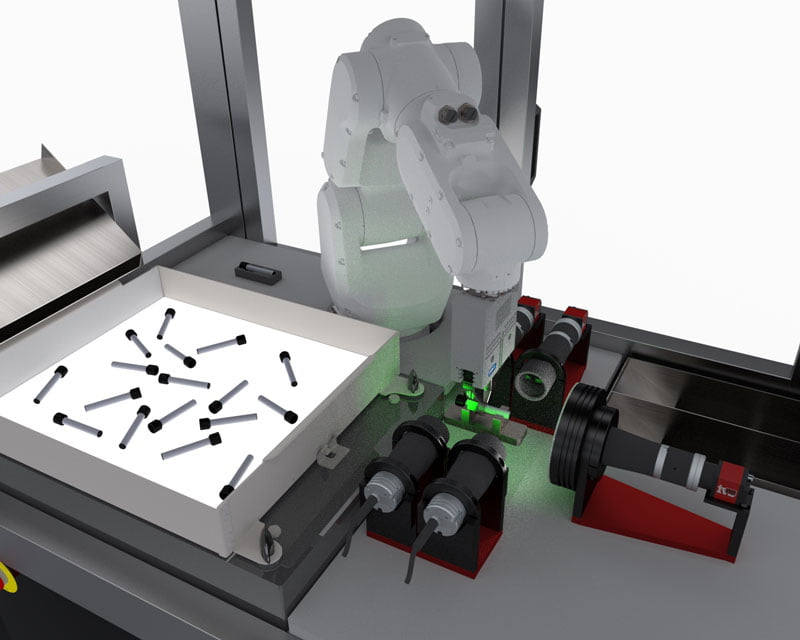

The mixed reality unit provides animated prompts, 3D projections and instructions to walk the operator step by step through the build. Alongside this, a machine vision camera mounted above the operator looks down on the scene. As each step in the sequence is completed and a part assembled, the vision system automatically inspects the product. The pass or fail result can be projected automatically into the operator’s line of sight, allowing them to continue the build knowing the part has been inspected. If a part is rejected, the operator receives a new sequence “beamed” into their view, with instructions on how to handle the failed assembly and where to place it. Combined with machine vision inspection, mixed reality becomes part of the normal production process. Data, statistics and critical quality information from the machine vision system are all presented in real time in the operator’s field of view.
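The inspection-driven build described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not a HoloLens or vision-system API: `AssemblyStep`, `InspectionResult`, `run_build` and the `inspect` callback are hypothetical names. The callback stands in for whatever pass/fail result the overhead camera returns, the returned list of strings stands in for the prompts projected into the headset, and the "reject bin" instruction is an invented example of the reject sequence.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class InspectionResult:
    """Hypothetical pass/fail result from the vision system for one step."""
    passed: bool
    reason: str = ""


@dataclass
class AssemblyStep:
    """One step of the build and the instruction shown in the headset."""
    name: str
    instruction: str


def run_build(
    steps: List[AssemblyStep],
    inspect: Callable[[AssemblyStep], InspectionResult],
) -> List[str]:
    """Walk the operator through each step, inspecting after every one.

    Returns the messages that would be projected into the operator's view.
    On the first failed inspection it switches to a reject sequence and stops.
    """
    display: List[str] = []
    for step in steps:
        display.append(f"STEP {step.name}: {step.instruction}")
        result = inspect(step)  # overhead camera checks the part just assembled
        if result.passed:
            display.append(f"PASS {step.name}")
        else:
            # Reject sequence (hypothetical wording): how to handle the failed unit
            display.append(f"FAIL {step.name}: {result.reason}")
            display.append("Place assembly in the reject bin and start a new unit")
            break
    else:
        # Runs only if no step failed (the loop finished without break)
        display.append("BUILD COMPLETE")
    return display


if __name__ == "__main__":
    demo_steps = [
        AssemblyStep("1", "Fit bracket"),
        AssemblyStep("2", "Insert PCB"),
        AssemblyStep("3", "Fit cover"),
    ]

    def simulated_inspect(step: AssemblyStep) -> InspectionResult:
        # Simulated vision results: step 2 fails, e.g. the PCB is missing
        if step.name == "2":
            return InspectionResult(False, "PCB not detected")
        return InspectionResult(True)

    for line in run_build(demo_steps, simulated_inspect):
        print(line)
```

In a real cell, `inspect` would wrap the vision system's actual output and each message would be pushed to the headset as it is produced rather than collected in a list.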

What’s the future of Mixed Reality and Machine Vision?

Imagine the engineering director walking the production line, now wearing a mixed reality unit. Each machine vision system has a QR code “anchor” on the outside of the machine. By looking towards it, the director has the vision system’s operating statistics beamed into view: yield, parts inspected, reasons for failure, live data and even an image of the last part that failed. Combining data with graphical elements allows better control of yield and, ultimately, of the factory’s productivity. Machine vision systems will have integrated solutions for communicating directly with wearable technology. In addition, some integrated vision solutions will require operators to direct image capture in order to gather images for artificial intelligence (AI).
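The per-machine statistics mentioned above (yield, parts inspected, reasons for failure, last failed image) could be kept in a small structure keyed by the ID decoded from each machine's QR anchor. This is a minimal sketch under stated assumptions: `StationStats`, `on_result`, `overlay_for` and the station ID `"VS-01"` are hypothetical, yield is taken as passed parts divided by inspected parts, and the returned string stands in for whatever overlay the headset would render.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class StationStats:
    """Running statistics for one vision system (hypothetical structure)."""
    inspected: int = 0
    passed: int = 0
    failure_reasons: Counter = field(default_factory=Counter)
    last_failed_image: Optional[str] = None

    def record(self, passed: bool, reason: str = "", image: Optional[str] = None) -> None:
        """Add one inspection result to the totals."""
        self.inspected += 1
        if passed:
            self.passed += 1
        else:
            self.failure_reasons[reason] += 1
            self.last_failed_image = image

    @property
    def yield_pct(self) -> float:
        # Yield = passed / inspected as a percentage; 0.0 before any inspections
        return 100.0 * self.passed / self.inspected if self.inspected else 0.0


# Registry keyed by the ID decoded from each machine's QR code anchor
stations: Dict[str, StationStats] = {}


def on_result(anchor_id: str, passed: bool, reason: str = "",
              image: Optional[str] = None) -> None:
    """Called for every part the vision system inspects."""
    stations.setdefault(anchor_id, StationStats()).record(passed, reason, image)


def overlay_for(anchor_id: str) -> str:
    """Text the headset could show when the wearer looks at this anchor."""
    s = stations.get(anchor_id)
    if s is None:
        return f"{anchor_id}: no data"
    top = ", ".join(f"{r} x{n}" for r, n in s.failure_reasons.most_common(3))
    return (f"{anchor_id}: yield {s.yield_pct:.1f}% ({s.passed}/{s.inspected}); "
            f"top failures: {top or 'none'}; last fail image: {s.last_failed_image}")
```

For example, after three passes and one failure recorded as `on_result("VS-01", False, "label misprint", "fail_0001.png")`, `overlay_for("VS-01")` reports a yield of 75.0% (3/4) along with the failure reason and the image of the failed part.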

Perhaps ultimately, such a mixed reality unit will become a standard part of the supply of a machine vision inspection machine, helping not only with running the machine but also enabling faster back-up support, maintenance and training for the machine vision system.